Program

DAY-1 : Jan 29

| Time | Program |
|---|---|
| 9:00 - 9:20 | Opening + Message from MEXT |
| 9:20 - 10:10 | Keynote 1 Satoshi Matsuoka (RIKEN, R-CCS) |
| 10:10 - 10:30 | Break |
| 10:30 - 11:05 | Quantum Science Invited Talk Wibe Albert de Jong (Lawrence Berkeley National Laboratory) |
| 11:05 - 11:55 | Quantum Science Kae Nemoto (OIST) Suguru Endo (NTT) |
| 11:55 - 13:30 | Lunch Fugaku Tour B (Exhibition area, no application required) |
| 13:30 - 14:05 | Science by Computing: Classical, AI/ML Invited Talk Jan Kosinski (EMBL, Hamburg) |
| 14:05 - 14:55 | Science by Computing: Classical, AI/ML Bruno Adriano (Tohoku University) Marie Oshima (IIS, University of Tokyo) |
| 14:55 - 15:15 | Break |
| 15:15 - 15:50 | FS Invited Talk Eric Monchalin (Eviden) |
| 15:50 - 16:40 | FS Masaaki Kondo (RIKEN, R-CCS) Takeshi Iwashita (RIKEN, R-CCS \| Kyoto University) |
| 16:40 - 17:00 | Group Photo |
| 17:00 - 18:20 | Poster Session Reception hall (3rd floor) |
| 18:30 - 20:00 | Reception Reception hall (3rd floor) |

DAY-2 : Jan 30

| Time | Program |
|---|---|
| 9:00 - 10:20 | Fugaku Tour A (Computer room, application required) |
| 10:20 - 11:10 | Keynote 2 Mikael Johansson (CSC Finland) |
| 11:10 - 11:45 | Quantum computing Invited Talk Mitsuhisa Sato (RIKEN, R-CCS) |
| 11:45 - 12:10 | Quantum computing Wataru Mizukami (Osaka University) |
| 12:10 - 13:45 | Lunch Fugaku Tour B (Exhibition area, no application required) |
| 13:45 - 14:20 | Science of Computing: Classical, AI/ML Invited Talk Michela Taufer (University of Tennessee) |
| 14:20 - 14:55 | Science of Computing: Classical, AI/ML Invited Talk Rick Stevens (Argonne NL \| U Chicago) |
| 14:55 - 15:20 | Science of Computing: Classical, AI/ML Makoto Taiji (RIKEN, BDR) |
| 15:20 - 15:40 | Break |
| 15:40 - 17:00 | Panel Discussion |
| 17:00 - 17:10 | Closing |

All oral presentations and the panel discussion will be held in the conference room (3rd floor).

Program

- Keynote 1 (DAY-1 : Jan 29 9:20 - 10:10)

-

- Satoshi Matsuoka (RIKEN, R-CCS)

-

Computing for the Future at RIKEN R-CCS: AI for Science, Quantum-HPC Hybrid, and FugakuNEXT

-

At RIKEN R-CCS, the legacy of Fugaku, our flagship supercomputer, is just the beginning. We're embarking on an ambitious journey to redefine the landscape of high-performance computing, with a keen focus on societal impact and scientific innovation. Our roadmap includes several groundbreaking projects that promise to elevate our capabilities and contributions to unprecedented levels. Central to our strategy is the "AI for Science" initiative, a project that places artificial intelligence at the heart of scientific research. This endeavor aims to harness the power of AI to decipher complex data, accelerate discovery processes, and provide deeper insights across various scientific domains. By integrating AI with supercomputing, we're not just enhancing computational efficiency; we're transforming the very paradigm of scientific exploration. In parallel, we're excited about the development of "FugakuNEXT," the successor to Fugaku. This next-generation supercomputer will incorporate advanced technologies, including innovative memory solutions designed to drastically reduce the energy consumption associated with data movement, a critical challenge in scaling supercomputing capabilities. Moreover, our commitment to expanding the frontiers of computability extends to the realm of Quantum-HPC Hybrid computing. This pioneering project aims to merge quantum computing's unique capabilities with the robust power of traditional high-performance computing, opening new avenues for solving previously intractable problems. Recognizing the importance of accessibility and flexibility in computing resources, we're also integrating our supercomputing assets with cloud platforms, notably AWS. This strategic move will democratize access to supercomputing power, enabling a broader range of researchers to tackle pressing global challenges with greater agility and scalability.
Together, these initiatives represent RIKEN R-CCS's vision for the future—a future where supercomputing is not just about raw computational power, but about enabling a more profound understanding of the natural world, driving innovation, and contributing solutions to some of the most pressing issues facing humanity today.

- Quantum Science Invited Talk 1 (DAY-1 : Jan 29 10:30 - 11:05)

-

Session Chair: Takahito Nakajima (RIKEN, R-CCS)

- Wibe Albert de Jong (Lawrence Berkeley National Laboratory)

-

Towards practical applications on quantum computers

-

Quantum computing has the potential to develop as an experimental and computational platform for physics, chemistry, materials science, and biology. Considerable progress has been made in hardware, software and algorithms that allow us to probe practical applications with quantum computers, and make scientific discovery a reality. In this talk, I will discuss some of the recent developments in quantum computing algorithms to simulate the complex many-body systems common in physical sciences. To obtain reliable results from NISQ quantum computers, error mitigation, and reduction of computational complexity are essential. I will highlight some of our efforts to enable reliable simulations on quantum hardware.

- Quantum Science (DAY-1 : Jan 29 11:05 - 11:55)

-

Session Chair: Takahito Nakajima (RIKEN, R-CCS)

- Kae Nemoto (OIST)

-

Quantum Dynamics and Machine Learning

-

There has been a huge worldwide effort to find problems which noisy intermediate-scale quantum (NISQ) processors can easily solve. However, it turns out to be rather difficult to find practical problems such processors are really good at. In this talk, we will address this issue by asking ourselves a simple question: how should we ask questions to noisy quantum computers? We will first introduce the concept of quantum extreme reservoir computation (QERC), which can solve various classification problems with high accuracy using as few as ten qubits. Then we will detail the advantages and disadvantages of QERC and discuss how quantum dynamics classify input states.

- Suguru Endo (NTT)

-

Quantum algorithms based on post-processing: Quantum error mitigation and hybrid tensor networks

-

The computational power of quantum computers attracts significant attention; however, their scalability is still limited, and they suffer from non-negligible computational noise due to environmental interactions. In this talk, we give an overview of methods to expand the size of simulated quantum systems, i.e., hybrid tensor networks (HTNs), and of quantum error mitigation (QEM) methods for error suppression. Then, we report our recent progress in these fields. For HTNs, we show how transition matrices, which are necessary in materials science and chemistry, can be computed. We also report our formulation of noisy HTNs, which reflects realistic conditions. For QEM, we introduce a quite general formalism called generalized quantum subspace expansion, which is a unified framework of quantum error mitigation methods. We show that this method enables the simulation of larger quantum systems as well as mitigating noise.

- Science by Computing: Classical, AI/ML Invited Talk (DAY-1 : Jan 29 13:30 - 14:05)

-

Session Chair: Florence Tama (RIKEN, R-CCS)

- Jan Kosinski (EMBL, Hamburg)

-

Integrative structural biology in the era of accurate AI-based structure prediction

-

Macromolecular assemblies, comprising varied configurations of proteins and nucleic acids, are fundamental to biological processes. These assemblies vary in complexity, displaying configurations that range from simple dimers to intricate structures with numerous subunits. The elucidation of their structures is pivotal for decoding functional mechanisms and interactions. Integrative structural biology amalgamates complementary techniques, including electron microscopy, X-ray crystallography, chemical crosslinking, and computational modeling, to construct comprehensive structural representations of these assemblies. I will present our work on integrative computational modeling of macromolecular assemblies and how our approaches changed following the emergence of artificial intelligence structure prediction programs such as AlphaFold. Through real case examples, I will showcase the enhanced modeling pipelines and their applications in resolving complex biological structures.

- Science by Computing: Classical, AI/ML (DAY-1 : Jan 29 14:05 - 14:55)

-

Session Chair: Florence Tama (RIKEN, R-CCS)

- Bruno Adriano (Tohoku University)

-

Application of AI and physics-based modeling to enhance disaster science

-

One of the most important aspects when a disaster happens is accurately understanding the intensity of the catastrophe in real time. This information will lead to efficient post-disaster response and relief efforts. The forecast system comprises rapid numerical simulation with a High-Performance Computing (HPC) infrastructure. Modern machine learning models can learn complex patterns from large computed datasets and enhance HPC-based forecasting systems. Here, a fusion of AI algorithms and physics-based modeling for rapid prediction of disaster intensity is presented.

- Marie Oshima (IIS, University of Tokyo)

-

New perspective on a patient-specific blood flow simulation with a machine-learning technique for clinical applications

-

Since a patient-specific simulation uses medical image data such as CT or MRI, quantifying the impact of uncertainties in medical images on simulated quantities is an essential task for obtaining reliable results for clinical applications. In general, uncertainty quantification requires a large number of case studies to investigate the effects of uncertainties in a probabilistic manner. Thus, a machine-learning approach is an effective way to quantify uncertainties. Uncertainty quantification will be presented as a means to investigate the risk of CHS (Cerebral Hyperperfusion Syndrome) conditions using patient data.

- Feasibility Study Invited Talk (DAY-1 : Jan 29 15:15 - 15:50)

-

Session Chair: Kentaro Sano (RIKEN, R-CCS)

- Eric Monchalin (Eviden)

-

Will HPC be a next decade disruptor, or will it be disrupted?

-

Supercomputing is at the forefront to solve societal, academic, and business challenges such as climate change, energy, decarbonation, sustainability, smart everything (cities, mobility, agriculture, medicine, manufacturing and so on), and many others. Supercomputing thus plays an essential role for any continent, economical space or nation which wants to tackle these numerous challenges and strengthen its leadership and sovereignty. However, the future of the HPC ecosystem itself is a daunting challenge. It has to overcome human and technology roadblocks to unlock its long-term potential, which includes, amongst others:

- Making scientific education and expertise great again,

- Fast energy transition to electricity foreshadowing a mismatch between supply and demand,

- Slowdown of Moore’s law,

- GPU trend favoring the performances of deep learning workloads.

The European Union, with its long and strong history in mathematics and science, can draw on its own strengths to free itself from these obstacles. Relying on its EuroHPC Joint Undertaking initiative, it is developing long-term and sovereign supercomputing technologies that can compete on the global HPC market. These key ingredients will support the European Union to equip itself with a world-class supercomputing infrastructure during the course of the decade and beyond.

- Feasibility Study (DAY-1 : Jan 29 15:50 - 16:40)

-

Session Chair: Kentaro Sano (RIKEN, R-CCS)

- Masaaki Kondo (RIKEN, R-CCS)

-

Introduction of the Feasibility Study Project for Next-Generation Supercomputing Infrastructures

-

The demand for high-performance computing is growing as it becomes an indispensable framework for science and AI. It is already time to consider architecture, system software, and applications for next-generation supercomputer systems beyond exascale. There are many technical challenges in developing next-generation systems as we are now facing the end of Moore's law. Together with various domestic and international partners, we have been conducting a feasibility study on next-generation supercomputing infrastructures as part of a national project by the Ministry of Education, Culture, Sports, Science and Technology (MEXT), Japan. In this talk, we introduce an overview and the recent status of RIKEN's feasibility study as a system research team.

- Takeshi Iwashita (RIKEN, R-CCS | Kyoto University)

-

Introduction of application group activities of RIKEN FS team and a prospective on next-generation applications

-

In this talk, the activities of the RIKEN FS application group since 2022 are introduced. The group's plan for 2024 is also explained. Then the speaker's personal opinion on next-generation applications and the expected features of computing systems is presented. His latest research result on a linear iterative solver for GPUs is also briefly introduced.

- Keynote 2 (DAY-2 : Jan 30 10:20 - 11:10)

-

Session Chair: Nobuyasu Ito (RIKEN, R-CCS)

- Mikael Johansson (CSC Finland)

-

Setting up a distributed HPC+QC network: The nitty-gritty details

-

Despite the potentially superior performance of quantum computers for some tasks, they rely on traditional computers for various tasks. With increasing performance on the quantum computing side comes an increased need for matching classical computing power. An efficient HPC+QC integration provides a two-way feedback loop, where HPC systems gain from a QC component and quantum computers are enhanced by supercomputing. Setting up an HPC+QC infrastructure is far from trivial when the goal is more than trivial functionality and performance. Here, I will discuss different aspects of the required components, ranging from user authentication and co-scheduling to data processing at different stages of the computational workflows. Compared to pure HPC infrastructure, QC brings added complexity through the scarcity of resources and the extreme heterogeneity of the user base. End-users need access to several different implementations of quantum-accelerated supercomputing. The distributed LUMI-Q concept will serve as an example and basis for a general discussion.

- Quantum Computing Invited Talk (DAY-2 : Jan 30 11:10 - 11:45)

-

Session Chair: Nobuyasu Ito (RIKEN, R-CCS)

- Mitsuhisa Sato (RIKEN, R-CCS)

-

Quantum HPC hybrid computing platform project in RIKEN R-CCS

-

As the number of qubits in advanced quantum computers grows beyond 100, demand for the integration of quantum computers and HPC is gradually growing. RIKEN R-CCS has been working on several projects to build a platform which integrates quantum computers and HPC. Recently, we have started a new project funded by NEDO titled “Research and Development of quantum-supercomputers hybrid platform for exploration of uncharted computable capabilities”. In this project, we are going to design and build a quantum-supercomputer hybrid computing platform which integrates different kinds of quantum computers, IBM and Quantinuum, with supercomputers including Fugaku. In this talk, the overview and plan of the QC-HPC hybrid computing platform projects in R-CCS will be presented.

- Quantum Computing (DAY-2 : Jan 30 11:45 - 12:10)

-

Session Chair: Nobuyasu Ito (RIKEN, R-CCS)

- Wataru Mizukami (Osaka University)

-

Development of quantum software at the quantum research center QIQB of Osaka University and its application to chemical problems

-

In recent years, quantum computers have undergone rapid development. Our institute, the Center for Quantum Information and Quantum Biology (QIQB) at Osaka University, has been organizing a research hub for quantum software in Japan, developing a full stack of software required for their use. This talk will first give an overview of software development for quantum computers at QIQB. We will then present the challenges and our efforts in applying quantum computing to chemistry, a field expected to be a promising application of this technology.

- Science of Computing: Classical, AI/ML Invited Talk (DAY-2 : Jan 30 13:45 - 14:20)

-

Session Chair: Mohamed Wahib (RIKEN, R-CCS)

- Michela Taufer (University of Tennessee)

-

Analytics4NN: Accelerating Neural Architecture Search through Modeling and High-Performance Computing Techniques

-

This talk addresses challenges and innovations in Neural Architecture Search (NAS) within high-performance computing. Focusing on the substantial computational demands of designing neural network (NN) architectures, we present Analytics4NN, a unified solution combining advanced modeling and high-performance computing techniques to enhance NAS efficiency. Analytics4NN introduces a novel fitness prediction engine alongside a composable workflow. It leverages parametric modeling for early fitness prediction of NNs, seamlessly integrating with existing NAS methods to form more flexible and efficient workflows. This strategy enables the early termination of less promising NNs, optimizing the use of computational resources and increasing the evaluation scope of NN models. Demonstrated on the Summit supercomputer, Analytics4NN shows a remarkable increase in throughput, up to 7.1 times, and a reduction in training time by as much as 5.3 times across diverse benchmark datasets and three state-of-the-art NAS implementations. Additionally, Analytics4NN's approach to distributed training and rigorous documentation significantly aids in the efficient design of NNs. Applied to a dataset generated by an X-ray Free Electron Laser (XFEL) experiment simulation, it reduced training time by up to 37%. It decreased the required training epochs by up to 38%. Analytics4NN represents a significant leap in the scalability and efficiency of NN design for scientific computing, effectively accelerating NAS by combining cutting-edge modeling with robust, high-performance computing techniques.

- Science of Computing: Classical, AI/ML Invited Talk (DAY-2 : Jan 30 14:20 - 14:55)

-

Session Chair: Mohamed Wahib (RIKEN, R-CCS)

- Rick Stevens (Argonne NL | U Chicago)

-

The Decade Ahead: Building Frontier AI Systems for Science and the Path to Zettascale

-

The successful development of transformative applications of AI for science, medicine and energy research will have a profound impact on the world. The rate of development of AI capabilities continues to accelerate, and the scientific community is becoming increasingly agile in using AI, leading us to anticipate significant changes in how science and engineering goals will be pursued in the future. Frontier AI (the leading edge of AI systems) enables small teams to conduct increasingly complex investigations, accelerating some tasks such as generating hypotheses, writing code, or automating entire scientific campaigns. However, certain challenges remain resistant to AI acceleration, such as human-to-human communication, large-scale systems integration, and assessing creative contributions. Taken together, these developments signify a shift toward more capital-intensive science, as productivity gains from AI will drive resource allocations to groups that can effectively leverage AI into scientific outputs, while others will lag. In addition, with AI becoming the major driver of innovation in high-performance computing, we also expect major shifts in the computing marketplace over the next decade: we see a growing performance gap between systems designed for traditional scientific computing and those optimized for large-scale AI such as Large Language Models. In part as a response to these trends, but also in recognition of the role of government-supported research in shaping the future research landscape, the U.S. Department of Energy has created the FASST (Frontier AI for Science, Security and Technology) initiative. FASST is a decadal research and infrastructure development initiative aimed at accelerating the creation and deployment of frontier AI systems for science, energy research, and national security. I will review the goals of FASST and how we imagine it transforming the research at the national laboratories.
Along with FASST, I’ll discuss the goals of the recently established Trillion Parameter Consortium (TPC), whose aim is to foster a community-wide effort to accelerate the creation of large-scale generative AI for science. Additionally, I’ll introduce the AuroraGPT project, an international collaboration to build a series of multilingual multimodal foundation models for science that are pretrained on deep domain knowledge to enable them to play key roles in future scientific enterprises. RIKEN and R-CCS are key partners in the TPC and AuroraGPT projects.

- Science of Computing: Classical, AI/ML (DAY-2 : Jan 30 14:55 - 15:20)

-

Session Chair: Mohamed Wahib (RIKEN, R-CCS)

- Makoto Taiji (RIKEN, BDR)

-

Outline of RIKEN’s AI for Science project and the development of the strong scaling accelerator

-

In this talk, I will cover two topics. The first is the AI for Science project “TRIP-AGIS”, which will start this year. The aim of the project is the development and application of multimodal foundation models for science. We especially focus on life science and materials science, and the project includes (1) the generation of multimodal data by advanced measurements, (2) the development of multimodal foundation models using HPC, and (3) research on autonomous research systems using foundation models and robotics / simulations. We will also explore computational aspects of training and inference for large-scale AI models from the viewpoints of processor architecture, software and application pipelines.

The second topic is the development of a strong-scaling accelerator for MD simulations. We are currently developing MDGRAPE-5, a special-purpose computer system for MD using FPGAs. It has hardware support for middle-grain data-flow processing to minimize latencies in the calculation flow. We will describe its outline and the future possibilities of strong-scaling accelerators.

- Panel Discussion (DAY-2 : Jan 30 15:40 - 17:00)

-

Panel Discussion: Synergy between Classical Computing, Quantum Computing, and AI: Current state, challenges, and future prospects

Moderator: Michela Taufer (University of Tennessee)

Panelists:

- Rick Stevens (Argonne NL | U Chicago)

- Kae Nemoto (OIST)

- Eric Monchalin (Eviden)

- Makoto Taiji (RIKEN, BDR)

List of Accepted Posters

- 2. Randomized-HOTRG and minimally-decomposed TRG

Katsumasa Nakayama (RIKEN R-CCS)* - In this poster, we introduce a cost reduction method for the higher-order tensor renormalization group (HOTRG) and its extension. All TRG methods rely on the singular value decomposition (SVD) to approximate the decomposition and contraction. Randomized SVD is a well-known method to reduce the cost, and we apply it to the HOTRG as the approximation of the contraction. We also introduce a further cost reduction method using a low-order tensor representation, called minimally-decomposed TRG. All of these methods achieve precision comparable to the HOTRG at a much lower computational cost.

- 3. Toward 3D precipitation nowcasting by fusing NWP-DA-AI: application of adversarial training

Shigenori Otsuka (RIKEN R-CCS)*; Takemasa Miyoshi (RIKEN R-CCS) -

Recent advances in deep learning have allowed us to seek new data-driven algorithms to predict precipitation based on past observations by weather radars. In parallel, high-end supercomputers have enabled us to perform “big data assimilation,” rapidly-updated numerical weather prediction at high spatiotemporal resolution by assimilating dense and frequent observations such as those of the Phased Array Weather Radar (PAWR) (e.g., Miyoshi et al. 2016a, b, Honda et al. 2022a, b). In conventional precipitation nowcasting, blending numerical weather prediction with extrapolation-based nowcasting is known to outperform either of these alone (e.g., Sun et al. 2014).

We have been testing a convolutional long short-term memory (ConvLSTM, Shi et al. 2015) based neural network. Recently, adversarial training has been considered a promising technique for deep learning-based precipitation nowcasting to avoid the blurring effect (Ravuri et al. 2021). Therefore, we applied adversarial training to a three-dimensional extension of ConvLSTM with PAWR. Preliminary results indicate that the use of adversarial loss increases small-scale features compared to training without the adversarial loss. In the future, numerical weather prediction output will be fed to the network to combine it with a deep learning-based prediction in a nonlinear manner.

- 4. Mathematical analysis of the ensemble transform Kalman filter for chaotic dynamics with multiplicative inflation

Kota Takeda (RIKEN R-CCS)*; Takashi Sakajo (Kyoto University) -

Data assimilation is a statistical method used for estimating the hidden state of a system over time, also known as a filtering problem. A data assimilation cycle consists of two primary steps: prediction and analysis. In the prediction step, model dynamics propagate the filtering distribution, while in the analysis step, new observations are incorporated into the estimation. We focus on studies of the ensemble Kalman filter (EnKF), usually applied to nonlinear dynamics. EnKF employs an empirical distribution of samples, termed an ensemble, to estimate nonlinear propagation. It is categorized into two algorithms based on the method of producing the analysis ensemble: the perturbed observation (PO) method, a simpler but stochastic approach, and the ensemble transform Kalman filter (ETKF), a more complex but deterministic method.

A key challenge in EnKF is the underestimation of the covariance matrix due to the finite ensemble size, leading to unstable filtering. Ad-hoc techniques like additive and multiplicative covariance inflation are used to address this. Kelly et al. established a uniform-in-time error bound for the PO method using additive inflation in dissipative chaotic dynamics, including the two-dimensional Navier-Stokes equations. However, the ETKF, due to its complexity, has not been analyzed similarly. Thus, our study aims to obtain an error bound for the ETKF in chaotic dynamics with multiplicative inflation.

- 5. Exploring the flavor structure of quarks and leptons with reinforcement learning

Satsuki Nishimura (Kyushu University)*; Coh Miyao (Kyushu University); Hajime Otsuka (Kyushu University) - The Standard Model of particle physics describes the behavior of elementary particles with high accuracy, but many problems remain. For example, the Standard Model does not explain the origin of the mass hierarchy of matter particles. In addition, the difference in flavor mixing between quarks and leptons is also mysterious. We therefore propose a method to explore the flavor structure of quarks and leptons with reinforcement learning, which is a type of machine learning. As a concrete model, we focus on the Froggatt-Nielsen model, and utilize a basic value-based algorithm for the model with U(1) flavor symmetry. By training neural networks on the U(1) charges of quarks and leptons, the agent finds 21 models that are consistent with experimentally measured masses and mixing angles of quarks and leptons. In particular, we define intrinsic values to evaluate consistency with experimental data, and the intrinsic values of normal ordered neutrino masses tend to be larger than those of inverted ordering. In other words, the normal ordering fits the current experimental data well, in contrast to the inverted ordering. Moreover, a specific value of the effective mass for neutrinoless double beta decay and a sizable leptonic CP violation induced by an angular component of the flavon field are predicted by the autonomous behavior of the agent. Thus, our results indicate that reinforcement learning can be a new method for understanding the flavor structure. The reference is JHEP12(2023)021 (arXiv:2304.14176 [hep-ph]).

- 6. TEZip Integration in LibPressio: Bridging Dynamic Application Capabilities with a Static C Environment

Amarjit Singh (RIKEN)*; Kento Sato (RIKEN) -

Big data refers to large datasets characterized by complex structures, requiring advanced methods for collection, analysis, and subsequent processing. Research in this domain investigates the details of handling considerable data volumes, with a focus on big data analysis. Accurate analysis and execution of data are essential in big data computation. The exponential growth of big data highlights the importance of studying and evaluating its various behavioral patterns. Scientific instruments and data analytics applications deal with the challenges posed by big datasets, which present difficulties in terms of mobility, storage, and processing. Compression, whether lossless or lossy, emerges as a possible solution to tackle these issues. Numerous applications based on compression techniques seek to reduce data volumes.

TEZip stands out as a DNN-driven compressor for processing time-evolving data. Functioning on the principle of prediction, TEZip predicts the succeeding data frame from information in the preceding frame. It then efficiently stores the difference between this prediction and the actual next frame as part of its compression strategy. LibPressio, on the other hand, serves as a comprehensive abstraction layer encompassing various compressors.

Facilities like SPring-8, LCLS-II, SNS, and various other instruments rely on software developed in C and C++, generating extensive amounts of time-evolving data at a rapid pace. TEZip, a deep neural network (DNN)-based compressor designed for compression of time-evolving data, is implemented in Python, posing challenges for seamless utilization and portability to C++. This challenge extends to other compressors, such as LinLogCompress.jl in Julia and those leveraging PyTorch/TensorFlow, for instance, the autoencoder-based compressor.

To seamlessly integrate TEZip and LibPressio, a robust bridge needs to be constructed between the Python and C++ environments. This undertaking is driven by the dual goals of ensuring effective collaboration between the two environments and prioritizing efficiency, especially in the realm of high-performance computing. We have completed an initial integration of TEZip and LibPressio to increase usability. Metrics can be generated for TEZip compression and decompression via LibPressio. TEZip's compression ratios are higher than those of all other compressors. TEZip’s compression ratio (error bound 1e-06) for Hurricane Isabel is 128, which is 2.4 times that of the leading SZ3 (52.8).

- 7. Single-reference coupled cluster theory for systems with strong correlation extended to excited states

Shota Tsuru (RIKEN R-CCS)*; Stanislav Kedžuch (RIKEN R-CCS); Takahito Nakajima (RIKEN R-CCS) -

Coupled cluster (CC) theory has size-extensivity and is regarded as “gold standard” of

electronic structure theory based on wave functions. Nevertheless, conventional CC theory

referenced to a single Slate determinant of the restricted Hartree-Fock (RHF) theory is troublesome

for systems with strong correlations, such as molecules in transition states and systems with

partially filled d-or f-orbitals, due to instability of the reference. Although multireference (MR)

CC is simple idea as adaptation of CC theory to systems with strong correlations, some technical

difficulties inherent to MR-CC hamper formulation of a theory applicable to general systems and

the extension to excited states.

Once spin-singlet and triplet pairs have been decoupled and the spin-triplet pairs have been removed in double electronic excitation of CCSD referenced to a single Slater determinant of the unrestricted Hartree-Fock (UHF) method, the stability and symmetry dilemma is solved and the modified CCSD method correctly behaves in dissociation limits and transition states. The CCSDlevel accuracy, which is once lost due to removal of the spin-triplet pairs double excitation, is recovered by recoupling double excitations of both the spin multiplicity. This modified CCSD theory named FSigCCSD implicitly describes static correlation related to the spin-triplet instability of the RHF reference employing the algorithms developed for the conventional CCSD theory.

This time, we have extended the FSigCCSD theory to excited states in the equation-of-motion scheme. The present work is a step towards a dynamics simulation method applicable to general chemical processes.

- 8 Parameterization of lipid-protein interactions in the iSoLFv2 model

Diego Ugarte (RIKEN R-CCS)*; Shoji Takada (Kyoto University); Yuji Sugita (RIKEN R-CCS, RIKEN BDR, RIKEN CPR) - Transmembrane proteins play essential roles in several biological processes. A possible regulation mechanism for these proteins is their selective partitioning inside lipid domains. However, depending on the study target, all-atom (AA) simulations of transmembrane protein partitioning require simulation times that are out of reach even on modern hardware. In this study, we present the current state of our latest parameterization of the lipid-protein interactions for the recently developed iSoLFv2 coarse-grained (CG) model. This new parameterization will enable iSoLFv2 to be used together with the AICG2+ and HPS CG protein models in the GENESIS molecular dynamics software to perform large-scale simulations of biological membrane systems and membrane-regulated phenomena.

- 9 Potential for improving ensemble weather forecasting using mixed floating-point numbers

Tsuyoshi Yamaura (RIKEN R-CCS)* - The purpose of this study is to improve forecast accuracy by using low-precision floating-point arithmetic to prevent the ensemble spread from shrinking in ensemble weather forecasting. Low-precision floating-point arithmetic is reproduced with a software emulator that allows the mantissa length of floating-point numbers to be adjusted in one-bit increments. First, we compared and evaluated ensemble methods that use low-precision floating-point arithmetic in the manner of the initial value ensemble method and of the model ensemble method. Low-precision arithmetic was found to be unsuitable as an initial value ensemble method because it acts like Gaussian noise and the ensemble spread does not grow much. When used as a model ensemble method, it was found to produce an ensemble spread similar to that of the conventional ensemble method. To objectively evaluate the low-precision floating-point ensemble method used as a model ensemble method, ensemble forecasting experiments were conducted in combination with the conventional ensemble method. As a result, the combined ensemble forecast scored higher on the objective evaluation than ensemble forecasts using only the conventional ensemble method or only the low-precision floating-point ensemble method. A possible reason is as follows: weather forecast models cannot reproduce weather phenomena below the grid scale because of their limited spatiotemporal resolution, and some models incorporate statistical assumptions to reduce computational load, which makes the simulated weather less random than actual weather. Ensemble methods using low-precision floating-point arithmetic can compensate for this missing randomness and are therefore expected to achieve higher evaluation values.

Although the validation in this study was conducted using a software emulator, the results suggest that low-precision floating-point arithmetic could also be implemented in hardware using FPGAs, which may allow faster operations without compromising forecast accuracy in ensemble forecasting.
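
As a rough illustration of the kind of emulation described above, the following Python sketch rounds IEEE 754 double-precision values to a chosen number of mantissa bits. It is not the emulator used in the study: the function name `reduce_mantissa`, the rounding rule (round to nearest, ties away from zero), and the restriction to finite inputs are assumptions made for this example, and the exponent range is left unchanged.

```python
import numpy as np


def reduce_mantissa(x, bits):
    """Round finite float64 values to `bits` explicit mantissa bits (0..52).

    Hypothetical helper for illustration only; NaN inputs are not handled.
    """
    if not 0 <= bits <= 52:
        raise ValueError("bits must be between 0 and 52")
    values = np.atleast_1d(np.asarray(x, dtype=np.float64))
    drop = 52 - bits                      # number of low mantissa bits to discard
    if drop == 0:
        return values.copy()
    raw = values.view(np.uint64)          # reinterpret the IEEE 754 bit patterns
    half = np.uint64(1 << (drop - 1))     # half of the last kept bit
    mask = np.uint64((1 << 64) - (1 << drop))  # keeps sign, exponent, top mantissa bits
    rounded = (raw + half) & mask         # a carry may propagate into the exponent
    return rounded.view(np.float64)


if __name__ == "__main__":
    sample = np.array([np.pi, 1.0 / 3.0, -2.5e-3])
    for bits in (52, 23, 10, 4):
        print(bits, reduce_mantissa(sample, bits))
```

Applying such a function after each arithmetic operation in a model would be one way to mimic reduced-precision hardware in software, at a considerable cost in speed.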

- 10 Improving the short-range predictability of severe convective storms using a 1000-member ensemble Kalman filter with 30-second update using multi-parameter phased array weather radar observations

James Taylor (RIKEN R-CCS)*; A. Amemiya (RIKEN R-CCS); S. Otsuka (RIKEN R-CCS); T. Honda (RIKEN R-CCS); Y. Maejima (RIKEN R-CCS); T. Miyoshi (RIKEN R-CCS) - High-precision forecasting of convective weather systems remains extremely challenging owing to their highly non-linear, rapid evolution and the involvement of small-scale processes and fine-scale features. Here, we present results of 30-minute precipitation forecasts for a convective system that passed over Tokyo, generated from an experimental real-time NWP modeling system that uses a 1000-member ensemble Kalman filter with a 30-second update using observations from a multi-parameter phased array weather radar (MP-PAWR). The system successfully predicted rapid changes to the storm’s structure and intensity, and accurately predicted the location of heaviest rainfall up to 30-minute lead times. A comparative analysis of forecasts initialized during a period when the convective system was undergoing development showed the NWP model consistently outperforming nowcasts generated from an advection-based model at up to 30-minute lead times. The 30-second update was found to be crucial for improving rain forecasts through increased moistening and upward motion in the storm environment.
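
For readers unfamiliar with ensemble Kalman filtering, the sketch below shows a single analysis step of one common deterministic variant, the ensemble transform Kalman filter, in NumPy. It is only a toy: it is global (no localization), applies no covariance inflation, and assumes a linear observation operator `H`. The function name `etkf_update` and all sizes are assumptions for this example and do not describe the real-time system presented in the poster.

```python
import numpy as np


def etkf_update(ens, H, y_obs, R):
    """One ensemble transform Kalman filter analysis step (illustrative only).

    ens   : (n_state, k) background ensemble, one member per column
    H     : (n_obs, n_state) linear observation operator
    y_obs : (n_obs,) observations
    R     : (n_obs, n_obs) observation error covariance
    """
    k = ens.shape[1]
    x_mean = ens.mean(axis=1, keepdims=True)
    Xb = ens - x_mean                          # background perturbations
    Y = H @ ens
    y_mean = Y.mean(axis=1, keepdims=True)
    Yb = Y - y_mean                            # perturbations in observation space
    C = Yb.T @ np.linalg.inv(R)                # (k, n_obs)
    A = (k - 1) * np.eye(k) + C @ Yb           # symmetric positive definite
    evals, evecs = np.linalg.eigh(A)
    Pa = evecs @ np.diag(1.0 / evals) @ evecs.T                # analysis covariance in ensemble space
    Wa = evecs @ np.diag(np.sqrt((k - 1) / evals)) @ evecs.T   # symmetric square root
    w_mean = Pa @ C @ (y_obs.reshape(-1, 1) - y_mean)          # weights for the mean update
    return x_mean + Xb @ (Wa + w_mean)         # w_mean is added to every column of Wa


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    background = rng.normal(size=(3, 20))      # 3 state variables, 20 members
    H = np.array([[1.0, 0.0, 0.0]])            # observe the first variable only
    analysis = etkf_update(background, H, np.array([2.0]), np.array([[0.5]]))
    print(background.mean(axis=1), analysis.mean(axis=1))
```

In this formulation the cost of the update is dominated by a k-by-k eigendecomposition, which is one reason large ensembles combined with frequent updates are computationally demanding.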

- 11 Power Consumption Metric on Heterogeneous Memory Systems

Andres Xavier Rubio Proano (RIKEN R-CCS)*; Kento Sato (RIKEN R-CCS) -

Over the years, the architecture of supercomputers has evolved to support an increasing number of applications aimed at addressing problems of interest to humanity. This evolution has recently embraced heterogeneity in two respects. First, on the processing side, various processing elements such as the Central Processing Unit (CPU), Graphics Processing Unit (GPU), and other accelerators coexist within the same machine. Second, on the memory-storage side, memory systems now incorporate more than one type of memory, giving rise to Heterogeneous Memory Systems (HMS). For instance, Sapphire Rapids supports High Bandwidth Memory (HBM), non-volatile memory (NVM), and Dynamic Random-Access Memory (DRAM). This heterogeneity complicates current and future applications, given the trend toward more memory-bound applications. Such applications may use the memory system in ways that do not appropriately exploit each type of memory in an HMS.

Memory devices exhibit different properties, such as latency, bandwidth, capacity, persistence, and power consumption, that allow for better performance depending on the nature of the application. This research focuses specifically on power consumption, motivated by scenarios that require the memory with the lowest power consumption or a balance between power consumption and application performance. All of this sits within the context of High-Performance Computing (HPC) power capping policies, which call for different ways of utilizing hardware. Data centers run complex simulations such as weather forecasting. In these applications, the interplay between computational power and power consumption efficiency becomes pivotal. Failure to manage power consumption effectively may result in exceeding power caps, leading to performance throttling or, in extreme cases, system shutdowns. Moreover, in environments where energy costs are a significant concern, understanding power consumption allows organizations to optimize operational expenses. By strategically utilizing different types of memory with varying power characteristics, it becomes possible to strike a balance between computational performance and power consumption efficiency.

For developers, the task of programming new applications or adapting existing ones requires a comprehensive understanding of the memory system. Without a specific strategy, this task can be highly complex, depending on the conditions under which the applications need to run. It is pertinent for developers to prepare their applications to effectively handle at least the main HMS setups. For this reason, we argue that every developer should possess a basic understanding of HMSs in terms of simple and accessible metrics such as bandwidth, latency, capacity, data persistence, power consumption, etc. Crucially, developers need to know how much memory power their applications will consume in a given memory system. This knowledge becomes vital in situations where executions need to be performed in minimal power consumption mode or when balancing power consumption and performance is crucial.

To understand memory performance, we have devised a methodology to characterize power consumption within an HMS, which enables the memory kinds to be ranked. First, we simplified the exposure of the memory system to applications using hwloc, the de facto standard for discovering hardware topology. Next, we applied profiling techniques to applications. Our challenge has been that performance counters often expose only per-socket memory power values, so we could not separate the power values of, for example, DRAM and NVM. To address this issue, our strategy binds the entire process to the memory target of interest and then, taking idle power values into account, attributes the measured value to that specific memory type.
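
The attribution step can be pictured with a small Python sketch. Everything here is hypothetical: the function name `rank_memory_by_power`, the memory-kind labels, and the power readings are invented for illustration, and the simple "measured minus idle" rule is our reading of the description above, not the authors' implementation.

```python
def rank_memory_by_power(bound_power_w, idle_power_w):
    """Rank memory kinds by the power attributed to them.

    bound_power_w : dict mapping a memory kind to the mean socket-level memory
                    power (in watts) measured while the whole process was bound
                    to that kind of memory
    idle_power_w  : socket-level memory power (in watts) measured while idle
    """
    attributed = {kind: power - idle_power_w for kind, power in bound_power_w.items()}
    # Lowest attributed power first.
    return sorted(attributed.items(), key=lambda item: item[1])


if __name__ == "__main__":
    # Made-up readings for one benchmark; real values would come from
    # performance counters sampled during each bound run.
    measurements = {"DRAM": 21.5, "NVM": 27.0, "HBM": 24.25}
    for kind, watts in rank_memory_by_power(measurements, idle_power_w=9.5):
        print(f"{kind}: {watts:.2f} W above idle")
```

Repeating this per application would give one ranking per workload, which matches the idea of ranking the memory system by application.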

We have extensively tested this strategy on a cluster with heterogeneous memory using different benchmark applications, and were able to rank the memory kinds for each application. This information is crucial for adapting, porting, and developing applications that seek to use hardware resources according to system limitations. Our strategy overcomes the limitation of systems whose performance counters cannot differentiate between memory kinds.

- 12 Impact of atmospheric forcing on SST biases in the LETKF-based Ocean Research Analysis (LORA)

Shun Ohishi (RIKEN R-CCS)*; Takemasa Miyoshi (RIKEN R-CCS); Misako Kachi (JAXA EORC) -

Various ocean analysis products have been produced by research institutions and used for geoscience research. In the Pacific region, to the best of our knowledge, there are four high-resolution regional analysis datasets [JCOPE2M (Miyazawa et al. 2017) and FRA-ROMS II (Kuroda et al. 2017) with 3D-VAR; NPR-4DVAR (Hirose et al. 2019); and DREAMS with Kalman filter (Hirose et al. 2013)], but there is no ensemble Kalman filter (EnKF)-based analysis dataset.

Recently, geostationary satellites have provided sea surface temperatures (SSTs) at higher spatiotemporal resolution than before. To take advantage of such observations, we have developed an EnKF-based ocean data assimilation system with a short assimilation interval of 1 day and demonstrated that the combination of three schemes [incremental analysis update (IAU; Bloom et al. 1996), relaxation-to-prior perturbation (RTPP; Zhang et al. 2004), and adaptive observation error inflation (AOEI; Minamide and Zhang 2017)] significantly improves geostrophic balance and analysis accuracy (Ohishi et al. 2022a, b). With the recent enhancement of computational resources, we have developed higher-resolution ocean data assimilation systems sufficient to resolve fronts and eddies and produced ensemble analysis products in the western North Pacific (WNP) and Maritime Continent (MC) regions, referred to as the LETKF-based Ocean Research Analysis (LORA)-WNP and -MC, respectively (Ohishi et al. 2023). The validation results show that the LORA has sufficient accuracy for geoscience research and various applications. However, high SST biases over 1.0 °C are detected near the coastal regions, where coarse atmospheric reanalysis datasets might not accurately capture the coastlines. Therefore, this study aims to investigate the impacts of atmospheric forcing on the nearshore SST biases and to examine the mechanisms by which the SST biases are improved.

We have conducted sensitivity experiments of the atmospheric forcing using atmospheric reanalysis datasets from JRA-55 (Kobayashi et al. 2015) and JRA55-do (Tsujino et al. 2018) with horizontal resolution of 1.25° and 0.5°, respectively, which are referred to as the JRA55 and JRA55do runs. We note that the setting of the JRA55 run is the same as Ohishi et al. (2023) and that the JRA55-do is a surface atmospheric dataset for driving ocean-sea ice models and is created by adjusting JRA-55 toward high-quality reference datasets such as CERES-EBAF-Surface_Ed2.8 data (Kako et al. 2013).

The validation results show that the SST biases and RMSDs relative to assimilated satellite and independent in-situ coastal data are improved in the JRA55do run, especially near the coastal regions. The mixed layer temperature budget analysis indicates that stronger latent heat release caused by stronger nearshore wind speed, together with weaker downward shortwave radiation resulting from the adjustment in JRA55do, is the main cause of the improvement of the high SST biases in September-October. This results in further improvement in November-January, because the smaller absolute innovation reduces the frequency of the AOEI application. Consequently, cooling in the analysis increments is stronger in the JRA55do run. This study indicates the importance of the quality of atmospheric forcing for EnKF-based ocean data assimilation systems. It would be important to keep access to surface atmospheric datasets for driving ocean-sea ice models.

- 13 Parametrized quantum circuit for weight-adjustable quantum loop gas

Rongyang Sun (RIKEN R-CCS)*; Tomonori Shirakawa (RIKEN R-CCS); Seiji Yunoki (RIKEN R-CCS) - Topological quantum phases emerge from correlated quantum many-body systems and exhibit novel features such as nontrivial entanglement structure and mutual statistics. However, the accompanying exponential computational complexity strongly hampers the study of these systems on classical computers. Rapidly developing quantum computing techniques offer a new way to investigate these challenging systems: the quantum simulation approach. Combining currently available noisy intermediate-scale quantum (NISQ) devices with the variational quantum eigensolver (VQE) algorithm to solve quantum many-body problems has attracted extensive attention. In this poster presentation, I will explain how to realize scalable VQE calculations in the intrinsic topologically ordered phase by designing problem-specific, scalable parameterized quantum circuit (PQC) Ansätze. We construct a real-device-realizable PQC that can represent a weight-adjustable quantum loop gas (denoted as the PLGC Ansatz) to study the toric code model in an external magnetic field (named TCM, not exactly solvable) and obtain accurate ground states (see Fig. 1) of the system at different sizes in VQE simulations on classical computers.

- 14 Python vs C on the A64FX processor: A case study from quantum circuit synthesis

Miwako Tsuji (RIKEN R-CCS)*; Sahe Ashhab (National Institute of Information and Communications Technology); Kouichi Semba (The University of Tokyo); Mitsuhisa Sato (RIKEN R-CCS) -

Python is a widely adopted programming language in scientific and high-performance computing. Since Python is an interpreted language, its performance characteristics differ from those of traditional HPC languages such as C and Fortran. Python code is often slower than equivalent code in other languages. Several Python modules, such as NumPy and SciPy, use optimized and compiled mathematical libraries internally to narrow the performance gap.

In this paper, we study the performance of a Python quantum circuit synthesis code on the A64FX processor and compare it with an equivalent code written in C. The quantum circuit synthesis algorithm that we use is a random search technique to find quantum gate sequences that implement perfect quantum state preparation or unitary operator synthesis with arbitrary targets. This approach is based on the recent discovery that a large multiplicity of quantum circuits achieve unit fidelity in performing the desired target operation, which means that the quantum circuit synthesis problem has a large number of solutions and a random search approach is well suited for this problem. The code generates a certain number of random circuits, typically 100 circuits, and optimizes single-qubit rotation parameters by a modified version of the gradient ascent pulse engineering (GRAPE) algorithm. The GRAPE algorithm is an iterative method, and each iteration involves numerous double-complex matrix-matrix operations, i.e. zgemm operations. Firstly, we evaluate the performance of zgemm operations in Python and C by changing the size of the matrix and the number of threads. Python and C zgemm codes use Scientific Subroutine Library II (SSL2), a thread-safe numerical calculation library highly optimized for the A64FX processor.
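
A micro-benchmark of this kind might look like the following NumPy sketch. It is not the authors' benchmark: the function name `time_complex_matmul` and the matrix sizes are assumptions. NumPy usually forwards `complex128` matrix products to the `zgemm` routine of whatever BLAS library it was built against, so whether SSL2 is actually used depends on the local NumPy build, and the number of threads is normally controlled outside the script (for many BLAS builds through an environment variable such as `OMP_NUM_THREADS`, which is also an assumption here).

```python
import time

import numpy as np


def time_complex_matmul(n, repeats=3, seed=0):
    """Time an n-by-n double-complex matrix product and estimate GFLOP/s."""
    rng = np.random.default_rng(seed)
    a = rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))
    b = rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))
    best = float("inf")
    c = None
    for _ in range(repeats):
        start = time.perf_counter()
        c = a @ b                       # typically dispatched to BLAS zgemm
        best = min(best, time.perf_counter() - start)
    best = max(best, 1e-12)             # guard against a zero timer reading
    gflops = 8.0 * n**3 / best / 1e9    # 8 real flops per complex multiply-add
    return best, gflops, c


if __name__ == "__main__":
    for n in (64, 128, 256, 512):
        seconds, gflops, _ = time_complex_matmul(n)
        print(f"n={n:4d}  best time={seconds:.6f} s  ~{gflops:.2f} GFLOP/s")
```

Running the same script with different thread settings, and comparing against a C program that calls `zgemm` directly, would give a comparison in the spirit of the one described above.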

Then, we evaluate the performance of the quantum circuit synthesis codes written in C and Python by changing the number of qubits and the number of threads. In our experiments, the performance of the Python code is slightly worse than that of the C code in a single-thread execution. Increasing the number of threads makes the gap larger since computations other than zgemm in the Python code become dominant.

- 15 Streamlined data analysis in Python

David A Clarke (University of Utah); Jishnu Goswami (RIKEN R-CCS)* -

In this poster, we will present our publicly available AnalysisToolbox (https://github.com/LatticeQCD/AnalysisToolbox) for statistical data analysis in Python and show how it can be run on supercomputers by overcoming the slowness of the scripting language. Python is an exceptionally user-friendly language, ideal for data analysis, largely due to its accessibility and robust, well-maintained libraries such as NumPy and SciPy.

However, for data analysis, these libraries lack some necessary functionality. Additionally, scripting languages generally run slower than compiled languages. To partially address these issues, we introduce AnalysisToolbox. This suite of Python modules is specifically designed to streamline data analysis for physics problems. Key features of AnalysisToolbox are: General mathematics: numerical differentiation, convenience wrappers for SciPy numerical integration and for solving IVPs; General statistics: jackknife, bootstrap, Gaussian bootstrap, error propagation, estimation of the integrated autocorrelation time, and curve fitting with and without Bayesian priors (the math and statistics methods are generally useful, independent of physics contexts); General physics: unit conversions, critical exponents for various universality classes, physical constants, Ising model in arbitrary dimensions; Lattice QCD: continuum-limit extrapolation, Polyakov loop observables, SU(3) gauge fields, reading in gauge fields, and the static quark-antiquark potential (these methods specifically target lattice QCD); QCD physics: hadron resonance gas model, QCD equation of state, and the QCD beta function (these methods are useful for QCD phenomenology, independent of lattice QCD contexts).

- 16 Unleashing CGRA's Potential for HPC

Boma Anantasatya Adhi (RIKEN R-CCS)*; Emanuele Del Sozzo (RIKEN R-CCS); Carlos Cortes (RIKEN R-CCS); Tomohiro Ueno (RIKEN R-CCS); Kentaro Sano (RIKEN R-CCS) - This poster highlights our previous and future design-space exploration efforts to optimize our Coarse-Grained Reconfigurable Array (CGRA) architecture for HPC, i.e., intra-CGRA interconnect optimization, FMA and transcendental operations on CGRA, programmable buffers, systolic-array-style execution on CGRA, predication support, and FPGA-based emulation in an actual HPC environment.

- 17 The effect of fermions on the emergence of (3+1)-dimensional space-time in the Lorentzian type IIB matrix model

Konstantinos N. Anagnostopoulos (NTUA); Takehiro Azuma (Setsunan University)*; Kohta Hatakeyama (Kyoto University); Mitsuaki Hirasawa (Universita degli Studi di Milano-Bicocca); Jun Nishimura (KEK & SOKENDAI); Stratos Papadoudis (NTUA); Asato Tsuchiya (Shizuoka University) - The type IIB matrix model, also known as the IKKT model, is a promising candidate for the non-perturbative formulation of string theory. Its Lorentzian version, in which indices are contracted using the Lorentzian metric, has a sign problem stemming from e^{iS} in the partition function (where S is the action). It has turned out that the Lorentzian version is equivalent to the Euclidean one, in which the SO(10) rotational symmetry is spontaneously broken to SO(3), under the Wick rotation as it is. This leads us to add a Lorentz-invariant mass term to the Lorentzian version of the type IIB matrix model. To cope with the sign problem, we perform numerical simulations based on the complex Langevin method (CLM), relying on stochastic processes for complexified variables. In order to avoid the “singular drift problem”, we add a fermionic mass term. To compensate for the reduced effect of fermions caused by this fermionic mass term and to mimic the SUSY cancellation, we introduce parameters to control the quantum fluctuations of the bosonic matrices, so that the (9 - d) spatial directions are suppressed and the emergent space is restricted to at most d dimensions (d = 4, 5, 6, 7, 8). We observe a (3+1)-dimensional space-time, with 3 out of the d spatial dimensions expanding at late times.

- 18 Towards PowerAPI and KVS-based Energy-Aware Image-based In-Situ Visualization on the Fugaku

Razil Bin Tahir (University of Malaya)*; Jorji Nonaka (RIKEN R-CCS); Ken Iwata (Kobe University); Taisei Matsushima (Kobe University); Naohisa Sakamoto (RIKEN R-CCS); Chongke Bi (Tianjin University); Masahiro Nakao (RIKEN R-CCS); Hitoshi Murai (RIKEN R-CCS) -

Energy efficiency has become a serious concern when running applications on HPC systems. Although these systems were designed mainly to run simulation codes as fast as possible, in situ visualization has gained increasing attention because of the ever-increasing size of simulation outputs. In situ visualization uses the same HPC system to execute part or all of the visualization processing, and a variety of tools and libraries currently help domain scientists integrate it with their simulation codes. Among different approaches, image- and video-based in situ visualization has been widely adopted as an effective approach for subsequent offline visual analysis. In this approach, a large number of renderings are required at every visualization time step, which can consume considerable computational resources. Fugaku adopted PowerAPI, which enables users to set the power mode for their jobs. However, simulation and visualization codes may have different processing behaviors that require different power settings to obtain the most energy-efficient runs.

We have investigated the computational cost and energy consumption of some rendering techniques by using PowerAPI and KVS (Kyoto Visualization System) on Fugaku. Since the power mode set for the simulation process may not be the best choice for the visualization step, we have focused on evaluating the power modes for the visualization processing. Because PowerAPI can adjust power settings while a job is in progress, in tightly coupled visualization it becomes possible to change the power setting for visualization independently of the simulation. This may make both processes more energy efficient, as shown in Fig. 1. From the HPC operational side, we should emphasize that opportunities to save energy in the visualization steps should also be taken seriously when adopting in situ visualization.

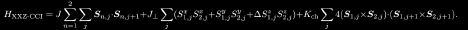

In this poster, we shed light on the energy efficiency of the visualization portion, which has not been considered before, and hope that the findings will be useful for potential users looking to run in situ visualization on Fugaku and other PowerAPI-enabled HPC systems.

- 19 Symmetry, topology, duality, chirality, and criticality in a spin-1/2 XXZ ladder with a four-spin interaction.

Mateo Fontaine (Keio University)*; Koudai Sugimoto (Keio University); Shunsuke Furukawa (Keio University) -

We study the ground-state phase diagram of a spin-1/2 XXZ model with a chirality-chirality interaction (CCI) on a two-leg ladder, which is described by the Hamiltonian

This model offers a minimal setup to study the interplay between spin and chirality degrees of freedom and is closely related to a model with four-spin ring exchange. The spin-chirality duality transformation allows us to relate the regimes of weak and strong CCIs. By applying Abelian bosonization and the duality, we obtain a rich phase diagram that contains distinct gapped featureless and ordered phases. In particular, Néel and vector chiral orders appear for easy-axis anisotropy, while two distinct symmetry-protected topological (SPT) phases appear for easy-plane anisotropy. The two SPT phases can be viewed as twisted variants of the Haldane phase. We perform numerical simulations based on the infinite density-matrix renormalization group to confirm the predicted phase structure and critical properties. We further demonstrate that the two SPT phases and a trivial phase are distinguished by topological indices in the presence of certain symmetries.

- 20 Thicket: Seeing the Performance Experiment Forest for the Individual Run Trees

Stephanie Brink (LLNL); Michael McKinsey (Texas A&M University); David Boehme (LLNL); Connor Scully-Allison (University of Utah); Ian Lumsden (University of Tennessee); Daryl Hawkins (Texas A&M University); Katherine E. Isaacs (University of Utah); Michela Taufer (University of Tennessee); Olga Pearce (LLNL)* -

Thicket is an open-source Python toolkit for Exploratory Data Analysis (EDA) of multi-run performance experiments. It enables an understanding of optimal performance configurations for large-scale application codes. Most performance tools focus on a single execution (e.g., single platform, single measurement tool, single scale). Thicket bridges the gap to convenient analysis of multi-dimensional, multi-scale, multi-architecture, and multi-tool performance datasets by providing an interface for interacting with the performance data.

Thicket has a modular structure composed of three components. The first component is a data structure for multi-dimensional performance data, which is composed automatically on the portable basis of call trees, and accommodates any subset of dimensions present in the dataset. The second is the metadata, enabling distinction and sub-selection of dimensions in performance data. The third is a dimensionality reduction mechanism, enabling analysis such as computing aggregated statistics on a given data dimension. Extensible mechanisms are available for applying analyses (e.g., top-down on Intel CPUs), data science techniques (e.g., K-means clustering from scikit-learn), modeling performance (e.g., Extra-P), and interactive visualization. We demonstrate the power and flexibility of Thicket through two case studies, one with the open-source RAJA Performance Suite on CPU and GPU clusters and another with a large physics simulation run on both a traditional HPC cluster and an AWS Parallel Cluster instance.

- 21 Enhancing Meteorological Modelling: Integrating Dual Precipitation Radar Data into the NICAM-LETKF System for Improved Forecasting

Michael Goodliff (RIKEN)* - This project focuses on configuring the NICAM-LETKF system with a robust integration of Dual Precipitation Radar (DPR) observations from the Global Precipitation Measurement (GPM) core satellite. It involves the intricate setup and calibration of the Nonhydrostatic ICosahedral Atmospheric Model (NICAM) and Local Ensemble Transform Kalman Filter (LETKF) framework to effectively assimilate and leverage dual precipitation radar data on a 28 km grid. This adaptation aims to significantly enhance the model's predictive capabilities by integrating crucial observational inputs. By establishing this refined system, the project aims to advance meteorological modelling techniques, offering improved accuracy and precision in forecasting weather patterns and associated weather phenomena. The poster presentation will outline the system's development, explain its functionality, and clarify future research prospects enabled by this framework.

- 22 A rank-mapping optimization tool based on simulated annealing using MPI trace information

Akiyoshi Kuroda (RIKEN R-CCS); Yoshifumi Nakamura (RIKEN R-CCS); Kazuto Ando (RIKEN R-CCS)*; Hitoshi Murai (RIKEN R-CCS); Chisachi Kato (The University of Tokyo) - We propose a rank-mapping optimization tool based on simulated annealing using MPI trace information. The target application is the fluid simulation software FrontFlow/blue, which solves the Navier-Stokes equations discretized with a finite element method on an unstructured grid. When such an unstructured-grid application is executed in a distributed parallel manner, the assignment of the MPI processes responsible for each subdomain of the computational domain to the physical coordinates of nodes in the network topology is generally not contiguous. As a result, MPI communication between adjacent subdomains, so-called “adjacent communication,” takes place between physically distant nodes, and communication performance deteriorates because of congestion on communication routes. This problem can occur in any directly connected network topology. To address it, we implemented a method for optimizing the mapping of MPI ranks to the physical coordinates of nodes in the network topology. Our method uses simulated annealing to reduce the value of an evaluation function calculated from MPI communication trace logs. At present, rank-mapping optimization has been performed for up to 768 processes, achieving a communication time reduction of approximately 25%. Although the tool is implemented assuming Fugaku’s network topology, it can be applied to general systems with a directly connected network.
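
The general idea of annealing a rank mapping can be sketched in a few lines of Python. This is a toy under stated assumptions, not the tool presented in the poster: the network is a small 2D torus rather than Fugaku's topology, the evaluation function is taken to be traffic volume times hop count, the traffic table is synthetic rather than derived from an MPI trace, and all names (`torus_hops`, `mapping_cost`, `anneal`) are hypothetical.

```python
import math
import random


def torus_hops(a, b, dims):
    """Hop count between node coordinates a and b on a torus of size dims."""
    return sum(min(abs(x - y), d - abs(x - y)) for x, y, d in zip(a, b, dims))


def mapping_cost(mapping, traffic, coords, dims):
    """Assumed evaluation function: sum over rank pairs of volume x hops."""
    return sum(volume * torus_hops(coords[mapping[i]], coords[mapping[j]], dims)
               for (i, j), volume in traffic.items())


def anneal(traffic, coords, dims, start, steps=5000, t_start=2.0, t_end=0.01, seed=0):
    """Simulated annealing over rank-to-node mappings using pairwise swaps."""
    rng = random.Random(seed)
    mapping = list(start)                 # mapping[rank] = index into coords
    cost = mapping_cost(mapping, traffic, coords, dims)
    best, best_cost = mapping[:], cost
    for step in range(steps):
        # Geometric cooling from t_start down to t_end.
        temp = t_start * (t_end / t_start) ** (step / (steps - 1))
        i, j = rng.sample(range(len(mapping)), 2)
        mapping[i], mapping[j] = mapping[j], mapping[i]
        new_cost = mapping_cost(mapping, traffic, coords, dims)
        # Metropolis criterion: always accept improvements, sometimes accept worse.
        if new_cost <= cost or rng.random() < math.exp((cost - new_cost) / temp):
            cost = new_cost
            if cost < best_cost:
                best, best_cost = mapping[:], cost
        else:
            mapping[i], mapping[j] = mapping[j], mapping[i]   # undo the swap
    return best, best_cost


dims = (4, 4)
coords = [(x, y) for x in range(dims[0]) for y in range(dims[1])]
# Synthetic traffic: each rank talks to the next rank in a logical ring.
traffic = {(r, (r + 1) % 16): 1.0 for r in range(16)}
start = list(range(16))
random.Random(1).shuffle(start)           # a deliberately scattered initial placement
best, best_cost = anneal(traffic, coords, dims, start)
print("initial cost:", mapping_cost(start, traffic, coords, dims), "annealed cost:", best_cost)
```

For clarity the cost is recomputed from scratch after each swap; a practical tool would update only the terms that involve the two swapped ranks.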

- 23 Bias-Exchange Adaptively Biased Molecular Dynamics for Dimerization of Amyloid β Precursor Protein

Shingo Ito (RIKEN CPR)*; Yuji Sugita (RIKEN CPR) - Replica-Exchange Umbrella Sampling (REUS), an on-grid exchange algorithm, is a powerful tool for calculating free energy along collective variables (CVs). However, it requires huge computational resources, especially for free energy calculations on multidimensional CVs. We combined bias exchange, a non-grid exchange algorithm, with adaptively biased molecular dynamics (ABMD), a kind of metadynamics method, to reduce the computational resources while keeping the accuracy of the free energy. This new method, called Bias-Exchange Adaptively Biased Molecular Dynamics (BE-ABMD), performed well in the free energy calculation of dimerization of the amyloid β precursor protein compared with conventional REUS, and it reduced the computational cost on 4D-CVs to less than 1% of the cost of REUS at the same accuracy.

- 24 A rank-mapping optimization by smoothing network link traffic using information entropy

Akiyoshi Kuroda (RIKEN R-CCS)*; Kazuto Ando (RIKEN R-CCS); Yoshifumi Nakamura (RIKEN R-CCS); Hitoshi Murai (RIKEN R-CCS); Chisachi Kato (The University of Tokyo) - To reduce communication time on the supercomputer Fugaku, we are optimizing rank mapping using FrontFlow/blue (FFB), a general-purpose flow solver based on large eddy simulation (LES). The main communication in FFB is isend/irecv. On Fugaku's direct communication network (the Tofu network topology), communication conflicts increase as the number of hops outside a Tofu unit increases, and this problem needed to be avoided. We are developing a general-purpose rank-mapping optimization tool based on annealing to reduce the number of hops for rank pairs that exchange a large amount of data. Currently, an evaluation function defined by the number of hops can be selected for the annealing. For more information on this topic, please refer to the other presentation "A rank-mapping optimization tool based on simulated annealing using MPI trace information". We also considered an evaluation function that accounts for the effective use of network links. To use network links effectively, communication must not be concentrated on specific links. The rank-mapping space is a finite discrete space whose states can be counted exactly, so information entropy of link usage can be defined and calculated. In this study, we attempted to maximize the information entropy calculated from the number of states of all link traffic. This annealing calculation smoothed the link usage rate. However, it cannot reduce the number of hops or the communication flow rate [Figure.1].
Furthermore, we defined a free energy as an evaluation function using the calculated information entropy and attempted annealing with a constant-temperature canonical ensemble. We will also report on a comparison with the annealing results obtained so far using the Metropolis Monte Carlo method [Figure.1].
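The idea of annealing a rank mapping toward smoother link usage can be illustrated with a toy model. The following is only a minimal sketch under loud assumptions: the network is a 1-D ring, not the Tofu topology; the entropy is the Shannon entropy of the normalized per-link traffic, which may differ from the state-counting definition used in the study; and the function names `link_traffic`, `link_entropy`, and `anneal` are hypothetical.

```python
import math
import random


def link_traffic(mapping, traffic, n_nodes):
    """Per-link load on a toy 1-D ring; link i joins node i and node (i + 1) % n_nodes."""
    load = [0.0] * n_nodes
    for (src, dst), volume in traffic.items():
        a, b = mapping[src], mapping[dst]
        forward = (b - a) % n_nodes
        if forward <= n_nodes - forward:
            links = [(a + k) % n_nodes for k in range(forward)]
        else:
            links = [(a - 1 - k) % n_nodes for k in range(n_nodes - forward)]
        for link in links:
            load[link] += volume
    return load


def link_entropy(load):
    """Shannon entropy of the normalized link load (assumed evaluation function)."""
    total = sum(load)
    if total == 0:
        return 0.0
    return -sum((x / total) * math.log(x / total) for x in load if x > 0)


def anneal(traffic, n_nodes, steps=2000, temperature=0.05, seed=0):
    """Metropolis search over rank swaps that favors higher link entropy."""
    rng = random.Random(seed)
    mapping = list(range(n_nodes))  # mapping[rank] = node
    current = link_entropy(link_traffic(mapping, traffic, n_nodes))
    best, best_map = current, mapping[:]
    for _ in range(steps):
        i, j = rng.sample(range(n_nodes), 2)
        mapping[i], mapping[j] = mapping[j], mapping[i]
        candidate = link_entropy(link_traffic(mapping, traffic, n_nodes))
        accept = candidate >= current or rng.random() < math.exp(
            (candidate - current) / temperature
        )
        if accept:
            current = candidate
            if current > best:
                best, best_map = current, mapping[:]
        else:
            mapping[i], mapping[j] = mapping[j], mapping[i]  # undo the swap
    return best_map, best


# Hypothetical traffic volumes between rank pairs (illustrative values only).
traffic = {(0, 1): 10.0, (2, 3): 1.0, (4, 5): 1.0, (6, 7): 1.0, (0, 4): 5.0}
initial = link_entropy(link_traffic(list(range(8)), traffic, 8))
best_map, best = anneal(traffic, 8)
```

Because this objective only rewards evenly spread traffic, it can lengthen routes, which is consistent with the observation above that entropy maximization alone does not reduce the number of hops.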

- 25 Machine Learning of Observation Operator for Satellite Radiance Data Assimilation in Numerical Weather Prediction

Jianyu Liang (RIKEN R-CCS)*; Takemasa Miyoshi (RIKEN R-CCS); Koji Terasaki (Japan Meteorological Agency) - Data assimilation (DA) in Numerical Weather Prediction combines weather forecast models with observations. It gives an optimal estimate of the initial condition of the model and improves its prediction. For DA, the observation operator (OO) is necessary to derive the model equivalent of the observations from the model variables. It is usually based on the physical relationships between the model variables and the observed variable, so we call it a physically based OO (P-OO). For satellite DA, radiative transfer models such as RTTOV are used as the P-OO for assimilating brightness temperature (BT) observations from satellites. However, gaining a comprehensive understanding of physical relationships can be time-consuming. Therefore, relying exclusively on a P-OO could limit our capacity to use the abundance of new data as early as possible. Since machine learning (ML) is good at finding complex relationships between variables given enough data, in this study we propose a method of using ML to build an OO without knowing the physical relationships, which we call ML-OO. Our DA system consists of the non-hydrostatic icosahedral atmospheric model (NICAM) and the local ensemble transform Kalman filter (LETKF). We used this system to assimilate one month of conventional observations. Subsequently, the model forecasts after each analysis and BT from the Advanced Microwave Sounding Unit-A (AMSU-A) onboard different satellites were used to train ML models to obtain the ML-OO. We tested its performance in the same month of another year. We first assimilated the conventional observations (experiment CONV). We then additionally assimilated BT from AMSU-A using RTTOV as the OO with online bias correction (experiment CONV-AMSUA-RTTOV). Finally, we assimilated the same observations as experiment CONV-AMSUA-RTTOV using ML as the OO and without online bias correction (experiment CONV-AMSUA-ML). With ECMWF Reanalysis v5 (ERA5) as the ground truth, the RMSD of temperature showed that experiment CONV-AMSUA-ML is slightly worse than experiment CONV-AMSUA-RTTOV but substantially better than experiment CONV, which demonstrates that the ML-OO is effective. The training did not rely on any physically based OO, so this method is purely data-driven. It has great potential for other types of observations, so that new observations can be assimilated as soon as possible.
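A data-driven observation operator can be pictured as a regression from model variables to observed brightness temperature, scored by RMSD. The sketch below is only an illustration under stated assumptions: a linear least-squares fit stands in for the unspecified ML model, the data are synthetic random numbers rather than NICAM forecasts or AMSU-A observations, and the names `fit_linear_oo`, `apply_oo`, and `rmsd` are hypothetical.

```python
import numpy as np


def fit_linear_oo(model_states, observed_bt):
    """Fit a linear map (with intercept) from model variables to BT channels."""
    design = np.hstack([model_states, np.ones((model_states.shape[0], 1))])
    weights, *_ = np.linalg.lstsq(design, observed_bt, rcond=None)
    return weights


def apply_oo(weights, model_states):
    """Compute the model equivalent of the observations with the learned operator."""
    design = np.hstack([model_states, np.ones((model_states.shape[0], 1))])
    return design @ weights


def rmsd(a, b):
    """Root-mean-square difference between two arrays."""
    return float(np.sqrt(np.mean((np.asarray(a) - np.asarray(b)) ** 2)))


# Synthetic stand-in data: temperature-like profiles and noisy weighted averages.
rng = np.random.default_rng(0)
n_samples, n_levels, n_channels = 500, 10, 3
states = rng.normal(250.0, 10.0, size=(n_samples, n_levels))
true_weights = rng.uniform(0.0, 1.0, size=(n_levels, n_channels))
true_weights /= true_weights.sum(axis=0)
bt = states @ true_weights + rng.normal(0.0, 0.2, size=(n_samples, n_channels))

# Train on one subset and evaluate on held-out samples.
train, test = slice(0, 400), slice(400, None)
weights = fit_linear_oo(states[train], bt[train])
error = rmsd(apply_oo(weights, states[test]), bt[test])
```

Evaluating on held-out samples loosely mirrors the abstract's choice of testing in the same month of a different year than the training period.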

- 26 Deformable Systolic Array Platform on 2-D Meshed Virtual FPGA Planes

Tomohiro Ueno (RIKEN R-CCS)*; Emanuele Del Sozzo (RIKEN R-CCS); Kentaro Sano (RIKEN R-CCS) -

Reconfigurable devices, such as FPGAs, are expected to become the accelerators of choice in HPC systems because of their flexibility and power efficiency. On the other hand, due to limitations such as memory and network bandwidth and the amount of resources on the chip, some effort will be required to deliver the high performance demanded by HPC applications. A tightly coupled FPGA cluster, in which a dedicated network connects a large number of FPGA devices, has been proposed as an answer to these requirements. Similar to traditional parallel systems, FPGA clusters are oriented to achieve high performance through the cooperative operation of each device. However, they face problems such as the need to develop an efficient communication system among FPGAs, the lack of memory bandwidth, etc.

To improve the usability of such FPGA clusters, VCSN, which establishes virtual network connections between arbitrary FPGAs, has been proposed. We have built a VCSN-based system that integrates and operates virtually 2-D mesh-connected FPGAs, a topology that is not feasible with direct connections. We treat these virtual 2-D mesh-connected FPGAs as a huge computational plane on which we propose stream processing using highly efficient dedicated circuits. As a demonstration, we propose a systolic array platform with arbitrarily customizable size and shape.

Our proposed deformable systolic array platform utilizes multiple FPGAs connected by a virtual network topology constructed by VCSN. Since the Intel PAC D5005 FPGA board used in this study has two network ports, only a one-dimensional topology can be constructed by direct connections with common cables. However, the virtual link functionality provided by VCSN allows us to virtually construct a two-dimensional mesh topology and form the virtual FPGA plane shown in Figure 1. Virtual links can be easily reconfigured, allowing an FPGA plane of the appropriate size and shape to be reconfigured in a fraction of the time required by the application.

As shown in Figure 1, this system provides scalable memory bandwidth by simultaneous read/write to/from off-chip memory on each FPGA board. Each FPGA board is installed on a CPU server, and the CPU drives a DMA controller on the FPGA to move data between the memory and the systolic array. We employ the MPI library to drive the DMA controllers on all the FPGAs so that the entire systolic array on multiple FPGAs operates simultaneously. For performance evaluation, we implemented a simple wavefront systolic array with data queuing at each DPU. The DPUs perform a multiply-and-accumulate (MAC) operation when the input data from the two directions are aligned.
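The data-driven behavior of a wavefront array, where a DPU fires only when operands from both directions are available, can be mimicked in software. The following is a minimal sketch and not the FPGA design: it assumes each DPU has one input queue from the west and one from the north, accumulates one output element of a matrix product, and forwards operands east and south; the function name `wavefront_matmul` is hypothetical.

```python
from collections import deque


def wavefront_matmul(A, B):
    """Compute A @ B on a simulated n-by-p grid of queue-driven MAC units."""
    n, m, p = len(A), len(B), len(B[0])
    west = [[deque() for _ in range(p)] for _ in range(n)]
    north = [[deque() for _ in range(p)] for _ in range(n)]
    acc = [[0] * p for _ in range(n)]

    # Rows of A stream in from the west edge, columns of B from the north edge.
    for i in range(n):
        west[i][0].extend(A[i])
    for j in range(p):
        north[0][j].extend(B[k][j] for k in range(m))

    fired = True
    while fired:  # stop when no unit has a matching operand pair left
        fired = False
        for i in range(n):
            for j in range(p):
                if west[i][j] and north[i][j]:
                    a = west[i][j].popleft()
                    b = north[i][j].popleft()
                    acc[i][j] += a * b  # multiply and accumulate
                    if j + 1 < p:
                        west[i][j + 1].append(a)  # pass operand east
                    if i + 1 < n:
                        north[i + 1][j].append(b)  # pass operand south
                    fired = True
    return acc


result = wavefront_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
```

The sweep order here is sequential rather than cycle-accurate, but because each queue preserves arrival order, every unit pairs the k-th operand from the west with the k-th operand from the north, which is what makes the accumulated sums correct.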

The performance evaluation with systolic arrays of various sizes and shapes, shown in Table I, showed that the operational performance increases in proportion to the number of FPGAs, although the overall performance is limited by the network bandwidth. In the future, we will evaluate the performance using real-world applications and improve the performance by implementing higher compute density.

- 27 A Control Simulation Experiment for Severe Rainfall Event in Hiroshima in August 2014

Yasumitsu Maejima (RIKEN R-CCS)*; Takemasa Miyoshi (RIKEN R-CCS) -

To predict severe weather, convection-resolving numerical weather prediction (NWP) is effective. This study explores a Control Simulation Experiment (CSE) aimed at controlling precipitation amount and locations to potentially prevent catastrophic disasters by simulating different scenarios of interventions of small perturbations, taking advantage of the chaotic nature of severe weather dynamics. In this study, we perform a CSE using a regional NWP model SCALE-RM for a severe rainfall event which caused catastrophic landslides and 77 fatalities in Hiroshima, Japan on August 19 and 20, 2014.

We perform a 1-km-mesh, hourly-update, 50-member observing system simulation experiment (OSSE) for this rainfall event initialized at 0000 UTC August 18. This provides the initial conditions for a 6-hour ensemble forecast at 1500 UTC August 19. To create small perturbations to change the nature run, we take the differences of all model variables between the ensemble member with the heaviest rain and the ensemble member with the weakest rain. We normalize the perturbations so that the maximum wind speed is 0.1 m s⁻¹. In this preliminary CSE, we try to control the heavy rainfall by adding the perturbations to the nature run in the OSSE at each time step from 1500 UTC to 1600 UTC on August 19, although perturbing all variables at all grid points is beyond human engineering capability. In the nature run, the 6-hour accumulated rainfall amount from 1500 UTC to 2100 UTC reaches 210 mm at peak. By contrast, the rainfall amount decreases to 118 mm in the CSE. We plan to apply limitations to the perturbations.

- 28 Performance Evaluation of Multi-Precision Conjugate Gradient Method in CPU/GPU Environment Using SYCL

Takuya Ina (Japan Atomic Energy Agency)*; Yasuhiro Idomura (Japan Atomic Energy Agency); Toshiyuki Imamura (RIKEN R-CCS) -

State-of-the-art supercomputers are based on CPUs/GPUs with a wide variety of architectures, including Nvidia, AMD, and Intel. Each manufacturer provides its own programming environment for its architecture: Nvidia provides CUDA, AMD provides HIP, and Intel provides DPC++. Therefore, it is necessary to develop a code using different programming environments for each supercomputer.

In addition, low-precision floating-point arithmetic performance has become several times higher than double-precision floating-point arithmetic performance due to the high computing needs of machine learning, and low-precision arithmetic performance is becoming more important. However, each architecture has different hardware support for floating-point number types. As a result, when unsupported floating-point number types are used, either the calculation cannot be performed or the performance is degraded by software emulation.

Thus, the recommended programming environment and available floating-point types differ depending on the architecture, making it difficult to develop a common code for multiple architectures. Although it is possible to create architecture-independent codes by using OpenMP and language standards such as C++ stdpar and DO CONCURRENT in Fortran, it is difficult to efficiently execute fine-grained parallel processing using thousands of threads. In addition, performance-aware libraries such as Kokkos and RAJA, which abstract parallel processing for easy code portability between architectures, are not available on some architectures. For example, they are not available on Fugaku and FX1000.

DPC++, Intel's preferred programming model, is an implementation of SYCL, a portable programming language standardized by the Khronos Group and based on C++, which allows a single source code to run on multiple CPUs/GPUs. Since there are multiple implementations of SYCL, performance improvement can be expected by selecting an implementation suitable for the architecture and the algorithms used. Moreover, since Intel supports DPC++, efforts and information on SYCL will be widely available in the future.

In this study, we evaluated the performance of the multiple-precision conjugate gradient (CG) solver using SYCL, with sparse matrix storage formats of Compressed Row Storage (CRS) and Diagonal Storage (DIA) for the 3-D Poisson equation. We tested the CG solver with half-precision (fp16), single-precision (fp32), and double-precision (fp64).
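The structure of such a solver can be illustrated without SYCL. The sketch below is a NumPy stand-in and not the authors' implementation: it builds the 7-point 3-D Poisson matrix in CRS form, runs an unpreconditioned CG entirely in the requested floating-point type, and covers only the CRS format. The names `poisson3d_crs`, `spmv`, and `cg`, the grid size, and the tolerances are assumptions chosen for illustration.

```python
import numpy as np


def poisson3d_crs(n, dtype):
    """CRS arrays (values, column indices, row pointers) for the 7-point Laplacian."""
    values, cols, row_ptr = [], [], [0]
    for z in range(n):
        for y in range(n):
            for x in range(n):
                row = (z * n + y) * n + x
                entries = [(row, 6.0)]
                if x > 0: entries.append((row - 1, -1.0))
                if x < n - 1: entries.append((row + 1, -1.0))
                if y > 0: entries.append((row - n, -1.0))
                if y < n - 1: entries.append((row + n, -1.0))
                if z > 0: entries.append((row - n * n, -1.0))
                if z < n - 1: entries.append((row + n * n, -1.0))
                for col, val in sorted(entries):
                    cols.append(col)
                    values.append(val)
                row_ptr.append(len(cols))
    return (np.array(values, dtype=dtype),
            np.array(cols, dtype=np.int64),
            np.array(row_ptr, dtype=np.int64))


def spmv(values, cols, row_ptr, x):
    """Sparse matrix-vector product in CRS; every row is non-empty here."""
    return np.add.reduceat(values * x[cols], row_ptr[:-1])


def cg(values, cols, row_ptr, b, tol, max_iter=500):
    """Unpreconditioned conjugate gradient in the dtype of the inputs."""
    x = np.zeros_like(b)
    r = b.copy()
    p = r.copy()
    rr = np.dot(r, r)
    b_norm = np.sqrt(np.dot(b, b))
    iterations = 0
    while iterations < max_iter and np.sqrt(rr) > tol * b_norm:
        Ap = spmv(values, cols, row_ptr, p)
        alpha = rr / np.dot(p, Ap)
        x += alpha * p
        r -= alpha * Ap
        rr_new = np.dot(r, r)
        p = r + (rr_new / rr) * p
        rr = rr_new
        iterations += 1
    return x, iterations


n = 6  # small illustrative grid: 6 x 6 x 6 unknowns
solutions = {}
for dtype, tol in ((np.float64, 1e-10), (np.float32, 1e-5)):
    vals, cols, ptr = poisson3d_crs(n, dtype)
    b = np.ones(n ** 3, dtype=dtype)
    solutions[dtype] = cg(vals, cols, ptr, b, tol)
```

`np.float16` can be passed the same way, although convergence at that precision is not checked here. A DIA variant would store each stencil diagonal as a dense array, which avoids the indirect `x[cols]` access that CRS needs.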

On the Intel CPU Cascade Lake environment, fp16 ran at 0.69x and 0.47x the speed of fp32 in the CRS and DIA formats, respectively, because of the lack of hardware support for fp16. However, the fp32 solvers with the CRS and DIA formats were 1.78x and 2.03x faster than fp64, respectively, and fp64 showed the same level of performance as OpenMP in both formats. On the FX1000 environment, the performance of the CRS format was almost the same for fp16, fp32, and fp64, and fp64 reached 0.85x the performance of OpenMP. In the DIA format, however, fp16 was 1.45x faster than fp32 and fp32 was 1.75x faster than fp64, showing reasonable performance gains, and fp64 was confirmed to have the same performance as OpenMP. On the Nvidia A100 environment, fp16 was 1.38x and 1.44x faster than fp32 in the CRS and DIA formats, respectively. fp32 was 1.47x faster than fp64 in both the CRS and DIA formats. fp64 was confirmed to have the same performance as CUDA.

- 29 Acceleration of gREST simulations on Fugaku supercomputer